Azure Databricks

Databricks is a company founded by the creators of Apache Spark. It builds on Apache Spark to provide a unified analytics platform, and Azure Databricks is that platform offered as a service on Microsoft Azure.

Why do we need Azure Databricks?

- To use Apache Spark on our own, we need to provision the machines, install Spark and the necessary libraries, and maintain the scaling and availability of those machines.

- With Databricks, the entire environment can be provisioned with just a few clicks.
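To make the contrast concrete, here is a minimal sketch of the kind of settings a cluster definition carries, written as the JSON body that the Databricks Clusters API accepts. The helper `build_cluster_spec` is hypothetical, and the runtime version, VM size, and cluster name are placeholder assumptions; check the values your own workspace offers. The sketch only builds and prints the spec and does not call any service.

```python
import json

def build_cluster_spec(name, min_workers, max_workers):
    """Return a dict shaped like a Databricks cluster-creation request body."""
    if min_workers < 1 or max_workers < min_workers:
        raise ValueError("need 1 <= min_workers <= max_workers")
    return {
        "cluster_name": name,
        # Placeholder runtime and VM size (assumptions, not recommendations).
        "spark_version": "13.3.x-scala2.12",
        "node_type_id": "Standard_DS3_v2",
        # The worker count is scaled between these two bounds.
        "autoscale": {"min_workers": min_workers, "max_workers": max_workers},
        # Terminate the cluster after 30 idle minutes.
        "autotermination_minutes": 30,
    }

spec = build_cluster_spec("demo-cluster", 2, 8)
print(json.dumps(spec, indent=2))
```

Everything that would otherwise be manual setup (machine size, Spark version, scaling limits) becomes a handful of fields, which is roughly what the few clicks in the UI fill in for us.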

Three main components

- Databricks tools, services, and optimizations

- Distributed computation

- DBFS (Databricks File System) for files

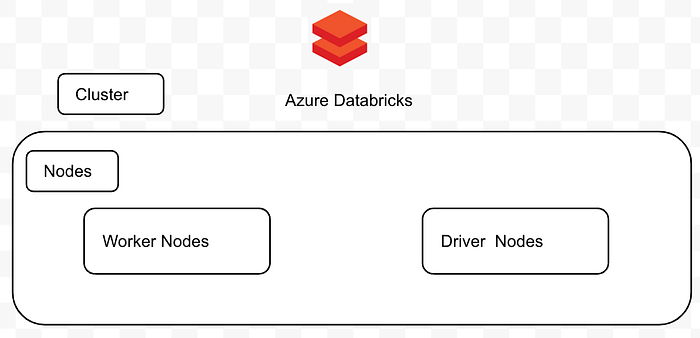

Databricks Infrastructure

An Azure Databricks workspace runs its workloads on clusters. A cluster is a set of nodes (virtual machines) with the Spark engine and other components installed.

A cluster is made up of two types of nodes, along with a cluster manager:

1. Worker (slave) nodes

Worker nodes are responsible for actually performing the underlying tasks.

2. Driver (master) node

(i) Entry point to the PySpark application.

(ii) Responsible for distributing tasks to the worker nodes.

3. Cluster manager

Responsible for managing the cluster's resources.
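The division of labor above can be sketched with plain Python. This is only an analogy, not Spark itself: a thread pool stands in for the worker nodes (and, loosely, for the resource management a cluster manager provides), and the function names `split_into_partitions`, `worker_task`, and `driver` are made up for illustration.

```python
from concurrent.futures import ThreadPoolExecutor

def split_into_partitions(data, num_partitions):
    """Driver side: cut the input into roughly equal chunks."""
    size = max(1, -(-len(data) // num_partitions))  # ceiling division
    return [data[i:i + size] for i in range(0, len(data), size)]

def worker_task(partition):
    """Worker side: do the actual computation on one partition."""
    return sum(x * x for x in partition)

def driver(data, num_workers=4):
    """Driver: plan the work, hand tasks to workers, combine the results."""
    partitions = split_into_partitions(data, num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partial_sums = list(pool.map(worker_task, partitions))
    return sum(partial_sums)

total = driver(list(range(1, 11)))
print(total)  # sum of squares of 1..10 -> 385
```

The driver never computes a square itself. It only splits the data, schedules the tasks, and merges the partial results, which mirrors the driver and worker roles described above.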

In Databricks, we have two types of clusters:

Interactive cluster

Interactive clusters are used to analyze data interactively. Multiple users can share one cluster and collaborate.

There are two types of interactive clusters:

1. Standard cluster

Intended for a single user. There is no fault isolation, so a fault caused by one user can impact the whole cluster. Resources are allocated to a single workload.

2. High concurrency cluster

Intended for multiple users. Resources are shared across users, and fault isolation is maintained between them. Table access control is also available.

Job cluster